
February 17, 2026

Discussions about the data center market often focus on aggregate growth: rising demand, expanding capacity, and strong revenue projections. CNBC, for example, reported that more than $61 billion flowed into the data center sector in 2025, setting a new record.

But these figures reflect the industry as a whole. What do they actually mean for an individual data center’s operators, owners, and investors? How do those headline numbers translate into returns at the facility level?

The unfortunate reality is that while the industry has never generated more revenue or operated more efficiently, profitability at the individual data center level is far from guaranteed.

How is this possible? The answer lies in a misunderstanding of what actually drives data center revenue in the first place.

How Do Data Centers Make Money?

What Does an AI Data Center Actually Sell?

A hospital makes money by delivering healthcare services. A shoe store makes money by selling shoes. A restaurant makes money by selling food.

But what does today’s data center sell?

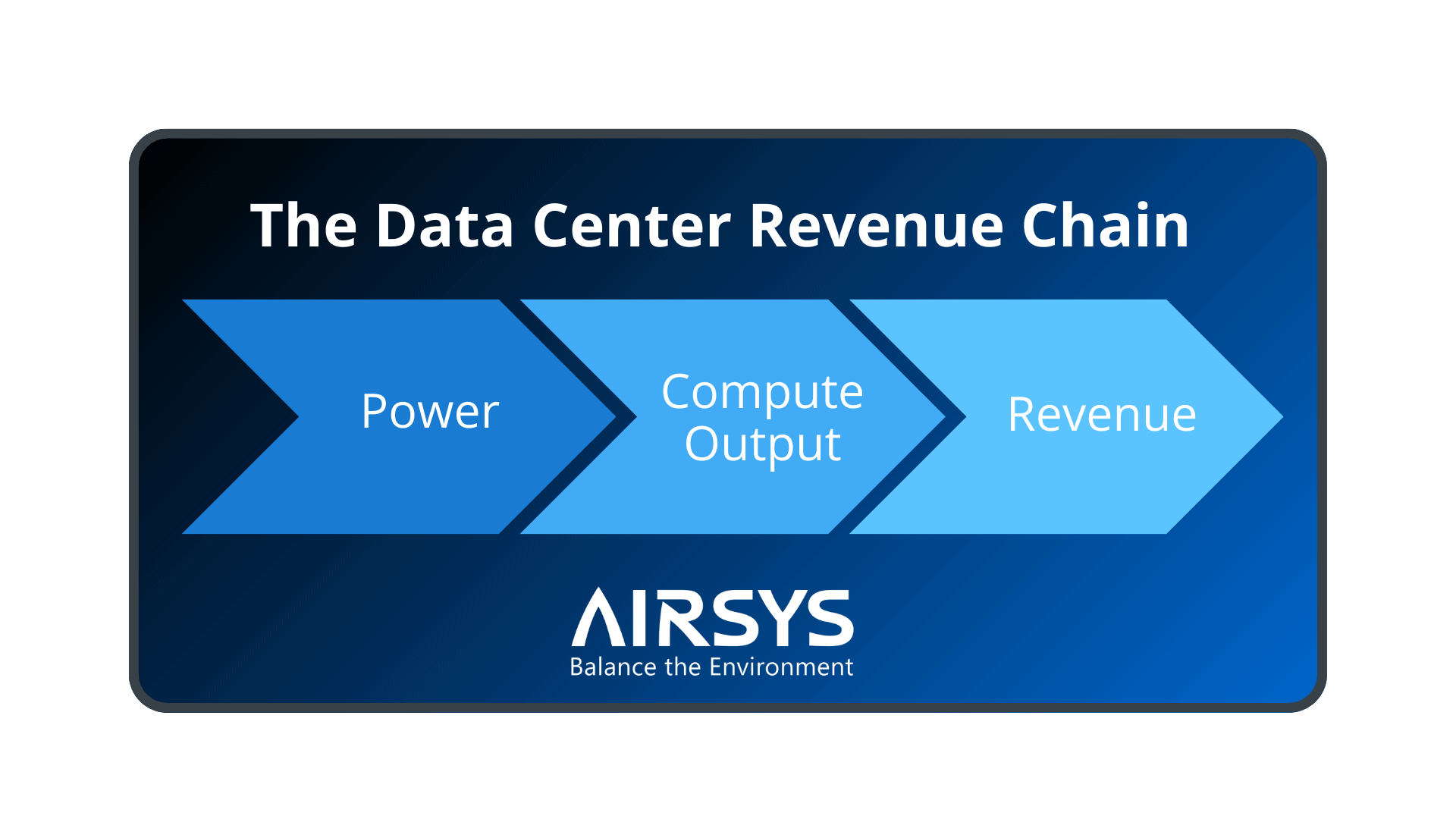

At their core, data centers sell compute.

Just as a restaurant’s purpose is to serve food, data centers are built to deliver compute capacity, which forms the basis of their revenue.

Compute Is the Product

Whether the business model is cloud, colocation, or wholesale, the product customers ultimately pay for is access to processing power: the ability to run applications, train models, serve content, and move data reliably at scale.

Space, power, cooling, and connectivity are not the end product; they are the infrastructure required to make computing available, usable, and continuous.

Real Estate vs. Computing as Revenue Drivers

Data centers have traditionally been described as “real estate businesses” that rent racks, cabinets, cages, or entire suites within shared facilities or, in hyperscale environments, lease entire buildings.

Yet as workloads shift toward AI, the business model is evolving from leasing space or physical infrastructure to enabling high-density compute delivery. Tenants are paying for environments that reliably support high-performance compute, so commercial value is tied to sustained compute performance rather than floor space or rack count.

The Barrier to Achieving Revenue Targets

Power sits at the start of the revenue chain for data centers. It enables compute output and, by extension, data center revenue. That said, power also introduces two fundamental challenges that directly impact profitability:

- Power efficiency reduces cost, not revenue.

Because energy accounts for the largest share of ongoing operating expenses, data centers have historically focused on power efficiency to control costs. These efforts are effective at reducing power spending, but they don’t necessarily increase earnings.

- Power availability directly limits compute output.

Power is the fuel that enables computing. Without sufficient and reliable power, workloads cannot run and compute cannot be produced. Because power is a finite resource, any constraint on its availability immediately constrains compute output.

When power is constrained or inefficiently activated at the outset, the effects cascade downstream.

Power Efficiency ≠ Effectiveness

Power efficiency can reduce overhead within the existing power supply, but it does not necessarily increase the power available to support additional workloads. In fact, in many facilities, limited visibility into redundant capacity, idle infrastructure, or underutilized servers means power is consumed without contributing to compute output.

To make this more tangible, a Lawrence Berkeley National Laboratory report found that up to 30% of servers in a typical data center may be “zombie” servers — systems that consume energy while serving no useful purpose.

This distinction highlights the gap between efficiency and effectiveness. Power efficiency measures how little energy is wasted, while power effectiveness measures power productivity, or how much of that power can be transformed into sustained, productive compute.
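The zombie-server figure can be turned into a rough estimate of stranded power. The sketch below is a back-of-the-envelope calculation, not a method taken from the report; the function name, the server count, the average per-server draw, and the use of PUE to scale IT-level waste up to facility level are all hypothetical assumptions chosen for illustration.

```python
def stranded_power_kw(
    server_count: int,
    zombie_fraction: float,
    avg_server_kw: float,
    pue: float = 1.0,
) -> float:
    """Estimate power drawn by servers that do no useful work.

    Assumptions (illustrative only): every zombie server draws the same
    average power as an active one, and facility overhead (cooling,
    power distribution) scales with IT power via PUE.
    """
    if not 0.0 <= zombie_fraction <= 1.0:
        raise ValueError("zombie_fraction must be between 0 and 1")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    it_level_kw = server_count * zombie_fraction * avg_server_kw
    # Multiplying by PUE attributes the matching share of overhead power.
    return it_level_kw * pue


if __name__ == "__main__":
    # Hypothetical facility: 2,000 servers averaging 0.5 kW each, using the
    # report's upper-bound figure of 30% zombie servers.
    it_only = stranded_power_kw(2_000, 0.30, 0.5)
    with_overhead = stranded_power_kw(2_000, 0.30, 0.5, pue=1.5)
    print(f"IT-level stranded power: {it_only:,.0f} kW")
    print(f"Facility-level stranded power: {with_overhead:,.0f} kW")
```

Under these assumptions, about 300 kW of IT power, or about 450 kW once overhead is included, is consumed without producing any compute.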

Power Efficiency ≠ Profitability

Because compute output drives revenue, any power that cannot be converted into usable compute represents lost earning potential. This is why a power-efficient facility with a PUE (Power Usage Effectiveness, total facility power divided by IT equipment power) of 1.2 may control costs well and improve margins, yet still underdeliver on revenue-generating compute. Efficiency metrics can look strong on paper, but they can be misleading, masking the financial reality of power performance.

This is the core paradox facing modern data centers: strong energy efficiency metrics can coexist with stranded capacity and missed revenue opportunities. As AI workloads push density higher and power availability tighter, profitability increasingly depends not on how efficiently power is consumed, but on how effectively that power is monetized through compute.
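A quick calculation shows why PUE alone can hide stranded capacity. PUE is a ratio of total facility power to IT equipment power, so it stays the same whether a site runs its IT load close to or far below its provisioned capacity. The two sites below, and their 10,000 kW provisioned capacity, are hypothetical.

```python
PROVISIONED_KW = 10_000  # hypothetical provisioned capacity for both sites


def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("it_equipment_kw must be positive")
    return total_facility_kw / it_equipment_kw


# Hypothetical measurements: both sites have the same overhead ratio,
# but site B runs far less IT load within the same provisioned envelope.
sites = {
    "Site A": {"it_kw": 8_000, "total_kw": 9_600},
    "Site B": {"it_kw": 4_000, "total_kw": 4_800},
}

if __name__ == "__main__":
    for name, m in sites.items():
        ratio = pue(m["total_kw"], m["it_kw"])
        share = m["it_kw"] / PROVISIONED_KW
        print(f"{name}: PUE {ratio:.2f}, IT load {share:.0%} of provisioned power")
```

Both sites report a PUE of 1.20, yet site B converts only 40% of its provisioned power into IT load, compared with 80% for site A.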

Evaluating Power Performance in a Revenue-Driven Era

As data center investment increases, operators need to measure power performance through a revenue lens. When performance is evaluated primarily through efficiency indicators, decisions are often made based on perceived progress rather than real operational capability and actual revenue potential.

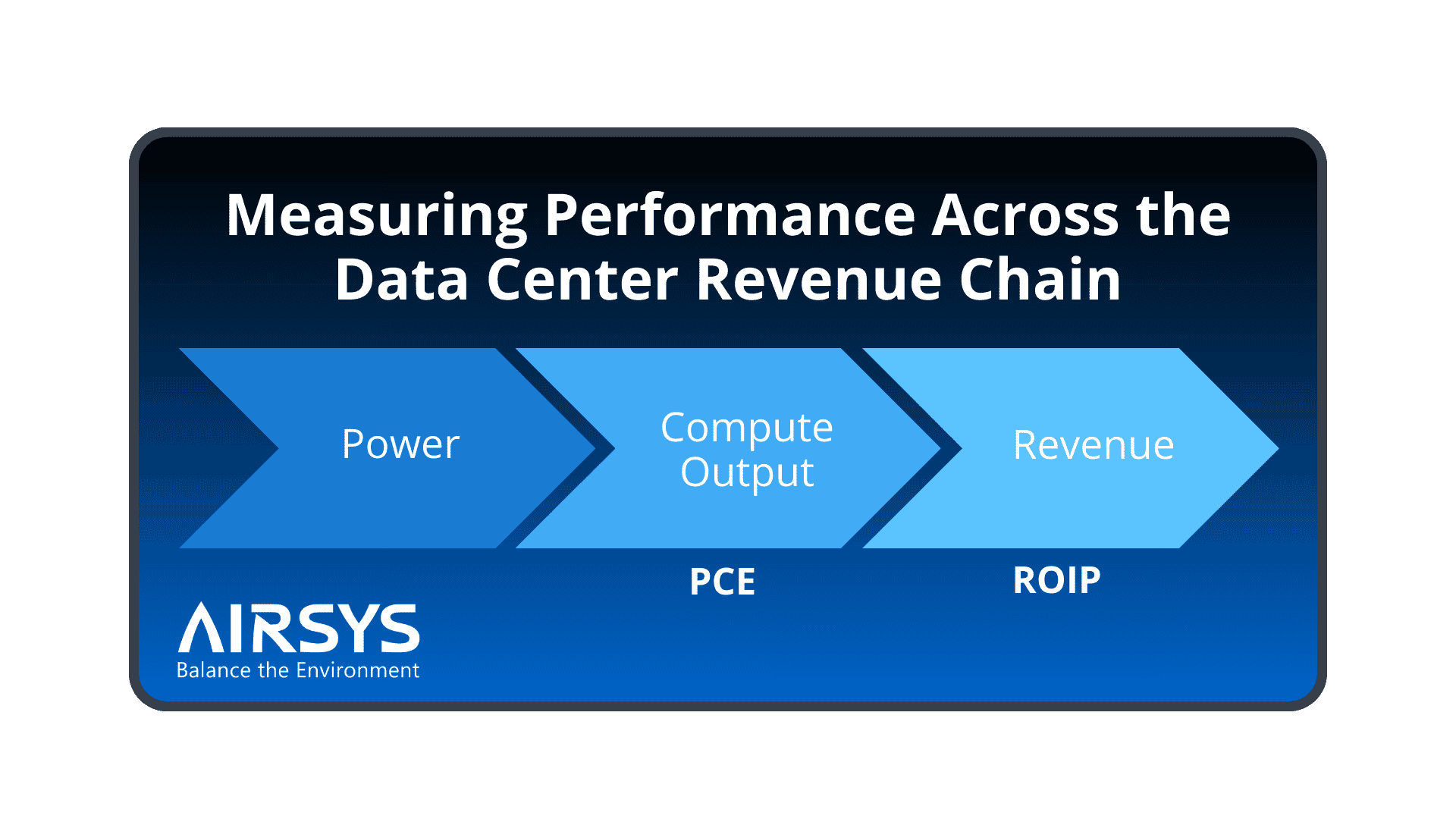

To evaluate performance in a compute-driven market, PUE should be paired with metrics that capture how effectively power is activated and converted into usable compute. Together, PUE, Power Compute Effectiveness (PCE), and Return on Invested Power (ROIP) provide a clearer basis for operational, financial, and expansion decisions.

Power Compute Effectiveness: How Much Power Becomes Compute

Power Compute Effectiveness (PCE) measures how much of a data center’s provisioned power becomes real compute, exposing the gap between available capacity and productive output. This makes PCE the first metric to monitor when assessing financial performance in power-constrained environments.

- Formula: IT Load (kW) / Total Power Capacity (kW provisioned)

- Direction: Higher is better

- Range: 0.50 (inefficient) to 0.90+ (highly effective)
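The PCE formula translates directly into a few lines of code. This is a minimal sketch: the function names and the example inputs (a hypothetical 10,000 kW provisioned site) are illustrative, and the range check simply compares the result against the 0.50 and 0.90 reference points listed above rather than any formal rating scale.

```python
def pce(it_load_kw: float, provisioned_kw: float) -> float:
    """Power Compute Effectiveness: IT load / total provisioned power capacity."""
    if provisioned_kw <= 0:
        raise ValueError("provisioned_kw must be positive")
    if not 0 <= it_load_kw <= provisioned_kw:
        raise ValueError("it_load_kw must be between 0 and provisioned_kw")
    return it_load_kw / provisioned_kw


def describe_pce(value: float) -> str:
    """Place a PCE value relative to the 0.50 / 0.90 reference points."""
    if value >= 0.90:
        return "highly effective (0.90+)"
    if value <= 0.50:
        return "inefficient (0.50 or below)"
    return "between the 0.50 and 0.90 reference points"


if __name__ == "__main__":
    provisioned = 10_000  # kW, hypothetical
    for it_load in (5_000, 7_200, 9_300):  # kW, hypothetical IT loads
        value = pce(it_load, provisioned)
        gap = provisioned - it_load  # provisioned kW not reaching the IT load
        print(f"IT {it_load:,} kW -> PCE {value:.2f}, {describe_pce(value)}, "
              f"{gap:,} kW not reaching IT")
```

Reporting the kilowatt gap alongside the ratio keeps the unused headroom visible in absolute terms, which is what matters when deciding whether new workloads can fit.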

Return on Invested Power: What You Earn from Every Kilowatt

Return on Invested Power (ROIP) connects power effectiveness directly to revenue outcomes, measuring the financial return on each kilowatt invested. High ROIP means power is producing revenue-generating compute, while low ROIP indicates megawatts are being consumed with little economic output. This level of financial visibility is beyond what efficiency metrics are designed to provide.

- Formula: Revenue or compute value ($ or TFLOPS) / Power invested (kW, total active power used)

- Direction: Higher is better
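ROIP can be sketched the same way. In this minimal example the revenue figures and the 10,000 kW of active power are hypothetical, chosen only to show how ROIP rises when the same power produces more revenue-generating compute; per the formula above, a compute value such as TFLOPS could be passed in place of revenue.

```python
def roip(value: float, active_power_kw: float) -> float:
    """Return on Invested Power: revenue (or compute value) per kW of active power.

    The unit of the result follows the unit of `value`, e.g. $/kW or TFLOPS/kW.
    """
    if active_power_kw <= 0:
        raise ValueError("active_power_kw must be positive")
    return value / active_power_kw


def relative_change(before: float, after: float) -> float:
    """Fractional change from `before` to `after` (0.25 means +25%)."""
    return (after - before) / before


if __name__ == "__main__":
    active_kw = 10_000  # hypothetical total active power, unchanged
    roip_before = roip(20_000_000, active_kw)  # hypothetical annual revenue, $
    roip_after = roip(25_000_000, active_kw)   # same power, more compute sold
    print(f"ROIP before: ${roip_before:,.0f}/kW")
    print(f"ROIP after:  ${roip_after:,.0f}/kW")
    print(f"Change: {relative_change(roip_before, roip_after):+.0%}")
```

Because the denominator is held constant, any ROIP gain here comes entirely from converting the same kilowatts into more revenue, which is the distinction the metric is meant to surface.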

Applying PCE and ROIP to Cooling Strategy

Once PCE and ROIP are established as metrics for evaluating how effectively power is converted into compute and revenue, the next question is how to use them. Cooling provides a clear and practical starting point, as it is one of the largest power consumers in the data center and one where changes are quickly reflected in performance.

Specifically, cooling is typically the second-largest energy consumer in a data center after the IT equipment itself, accounting for roughly 40% of total facility power consumption. Because it consumes such a significant share, cooling optimization can meaningfully ease constraints at the start of the revenue chain and allow more power to be directed toward revenue-generating compute workloads.

Viewed through PCE and ROIP, the effect of reducing cooling power is easy to trace: more provisioned power reaches the IT load (higher PCE), and that additional compute generates more financial return (higher ROIP).
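The link between cooling power and PCE can be illustrated with a simple model. This is a hypothetical sketch, not the method behind the case study that follows: it assumes a fixed provisioned envelope, a fixed amount of non-cooling overhead, and cooling power that scales linearly with IT load (kW of cooling per kW of IT). The "before" ratio is chosen so that cooling takes about 40% of facility power, matching the share cited above; all names and figures are illustrative.

```python
def max_it_load_kw(
    provisioned_kw: float,
    other_overhead_kw: float,
    cooling_kw_per_it_kw: float,
) -> float:
    """Largest IT load that fits in a fixed power envelope.

    Hypothetical linear model:
        provisioned = it + cooling_kw_per_it_kw * it + other_overhead
    """
    usable_kw = provisioned_kw - other_overhead_kw
    if usable_kw <= 0:
        raise ValueError("overhead exceeds provisioned power")
    if cooling_kw_per_it_kw < 0:
        raise ValueError("cooling_kw_per_it_kw cannot be negative")
    return usable_kw / (1.0 + cooling_kw_per_it_kw)


if __name__ == "__main__":
    provisioned = 10_000  # kW, hypothetical
    overhead = 1_000      # kW of non-cooling overhead, hypothetical
    # Before: 0.8 kW of cooling per kW of IT (cooling is ~40% of facility power).
    # After: 0.2 kW of cooling per kW of IT, a hypothetical improved design.
    for label, ratio in (("before", 0.8), ("after", 0.2)):
        it_kw = max_it_load_kw(provisioned, overhead, ratio)
        cooling_kw = ratio * it_kw
        print(f"{label}: IT {it_kw:,.0f} kW, cooling {cooling_kw:,.0f} kW, "
              f"PCE {it_kw / provisioned:.2f}")
```

Under these assumptions, cutting cooling from 0.8 to 0.2 kW per kW of IT lets the IT load grow from about 5,000 kW to about 7,500 kW within the same 10,000 kW envelope, raising PCE from 0.50 to 0.75. Real cooling loads are not perfectly linear, so the model indicates direction rather than a forecast.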

Case Study: Measuring Compute and Revenue Impact

The following case study demonstrates how PCE and ROIP can be used to evaluate power performance in the context of cooling strategy. The data compares a 10 MW legacy data center before and after retrofitting with Airsys’ LiquidRack™ spray cooling.

By applying PCE and ROIP, the impact of cooling optimization becomes measurable across operational performance and business results. These metrics show how much additional compute was enabled within the same power footprint, and how effectively that power translated into revenue.

| Metric | Before: Legacy Mechanical Cooling | After: AIRSYS LiquidRack™ |
|---|---|---|
| Total Facility Power | 10 MW | 10 MW |
| Cooling Power Consumption | 5–6 MW | ~1 MW |
| Infrastructure Power | 1–2 MW | ~5–6 MW |
| Available IT Load | ~10 MW | ~15 MW |
| Power Compute Effectiveness (PCE) | 0.63 | 0.93 |
| Return on Invested Power (ROIP) | Baseline | +35% |

How Can You Ensure Your Data Center Hits Revenue Targets?

Meeting revenue targets in today’s data center environment starts with understanding what to measure and how to measure it. In practice, data center operators and investors should redefine their performance priorities:

- Measure how much provisioned power actually translates into usable, revenue-generating output.

- Identify and eliminate sources of stranded power, including idle servers, oversized cooling, and unnecessary electrical overhead.

- Optimize cooling architecture to expand usable IT load and revenue potential without waiting for additional utility power.

- Design infrastructure and operations to scale compute effectiveness even when power availability cannot scale.

- Adopt performance metrics such as PCE and ROIP that reflect real operational capability and financial impact.

Airsys helps data center operators navigate power constraints with advanced cooling solutions that activate more usable power for IT workloads. With that cooling foundation in place, industry growth can translate into measurable revenue at the facility level. Contact us today to evaluate how cooling optimization can improve your revenue performance.