Building a dynamic cooling ecosystem for the AI factory era

January 25, 2026

Liquid cooling is no longer a futuristic option; it is an immediate need for AI-driven network transformation. As chip densities push thermal envelopes beyond what air cooling can physically handle, operators must adopt more advanced thermal strategies. Modular, compressorless architectures directly tackle the costly “energy trap,” a phenomenon where cooling infrastructure consumes up to 60% of a facility’s total energy. To maximize effectiveness and operational efficiency, operators can adopt a newer metric, Power Compute Effectiveness (PCE), alongside Power Usage Effectiveness (PUE). Liquid cooling innovations are key to this transformation: they can unlock stranded power and maximize revenue-generating compute capacity without massive, expensive capital expansion.

The Uptime Institute estimates that average data center rack power density increased by 38% from 2022 to 2024, with the steepest growth in AI and hyperscale deployments. Today’s data center operators require a modular, multi-medium cooling architecture designed to scale from 1 MW edge deployments to 100+ MW hyperscale environments. Liquid cooling provides that modular architecture and can be retrofitted into smaller legacy data centers as well as newer hyperscale environments, while still allowing for a hybrid approach.

Single-Phase Spray Cooling

Traditional cooling solutions struggle with high energy consumption, limited scalability, and rising operational costs.

As we think about current and future data center designs, a cooling standard for AI data centers has emerged: direct-to-chip (D2C). There are several versions of D2C cooling, with single-phase the most common. However, D2C systems typically rely on compressors, with a coolant distribution unit (CDU) separating the chilled water loop (the facility loop) from the technical loop (the coolant loop) that runs to the rack or server. With compressor-based architectures, operators must often design for worst-case conditions in which the compressors are fully loaded, reserving power that could otherwise be used for compute. This is especially inefficient for data centers in northern climates, where the compressors rarely run at full capacity because of the climate but must be accounted for anyway. The gap between “normal” operations, where the data center usually runs, and the power the data center was built to allocate is what gives rise to the concept of stranded power.

A single-phase, rack-based liquid spray cooling architecture is built specifically for AI-era deployments. It enables closed-loop, compressorless systems that can be paired with dry coolers and use free cooling, even in demanding or water-scarce climates. In this design, coolant is sprayed directly onto the chip or GPU rather than passing through a cold plate, and the chilled water or facility loop is brought all the way to the rack. This is possible because the server is fully enclosed within a liquid spray cooling cassette. Unlike immersion-based cooling, which submerges servers in a tank of fluid, single-phase spray cooling encloses the server within a cassette, allowing for higher levels of heat rejection than a traditional single-phase D2C system. It also eliminates the CDU, because the pump and the heat exchanger can be brought to the rack or cassette level. When chilled water temperatures are raised enough, the compressors found in more traditional applications can be removed, resulting in higher PCE, lower peak PUE, and reduced stranded power.
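
The condition "when chilled water temperatures are raised enough" can be illustrated with a rough feasibility check. The sketch below assumes a dry cooler can deliver coolant at roughly the ambient dry-bulb temperature plus a fixed approach. The function names, the 8 °C approach, and the sample temperatures are illustrative assumptions, not figures from this article or from any vendor.

```python
from typing import Iterable


def dry_cooler_sufficient(ambient_c: float, required_supply_c: float,
                          approach_c: float = 8.0) -> bool:
    """True when ambient air plus the approach still meets the required supply temperature.

    approach_c is an assumed gap between ambient air and the coolant leaving
    the dry cooler; real values depend on the equipment and site.
    """
    return ambient_c + approach_c <= required_supply_c


def free_cooling_fraction(ambient_samples_c: Iterable[float],
                          required_supply_c: float,
                          approach_c: float = 8.0) -> float:
    """Share of ambient samples in which a dry cooler alone would be enough."""
    samples = list(ambient_samples_c)
    if not samples:
        raise ValueError("at least one ambient temperature sample is required")
    covered = sum(
        dry_cooler_sufficient(t, required_supply_c, approach_c) for t in samples
    )
    return covered / len(samples)


if __name__ == "__main__":
    ambient = [5.0, 15.0, 25.0, 35.0]  # hypothetical ambient samples, deg C
    print(free_cooling_fraction(ambient, required_supply_c=20.0))  # 0.25
    print(free_cooling_fraction(ambient, required_supply_c=45.0))  # 1.0
```

With these hypothetical samples, a 20 °C supply requirement is met without compressors in only a quarter of the samples, while a 45 °C requirement is met in all of them, which is the situation in which compressors could be left out of the design.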

Turning Stranded Power into Computing Capacity and Revenue

Stranded power, or ineffective power, is the result of designing data centers around worst-case scenarios, as described above. Those scenarios are rarely, and sometimes never, realized. As a result, the data center may be using chillers when a dry cooler could be used instead. Taking away the compressors eliminates much of that stranded power.

By eliminating compressor-based cooling overhead, operators can redirect previously allocated infrastructure power toward revenue-generating compute workloads. As operators adopt single-phase spray cooling, they’ll reap sustainability benefits, while also unlocking stranded power and transforming it into additional computing capacity and revenue.
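
The arithmetic behind this reallocation can be sketched as follows. The `PowerBudget` class, its field names, and every number in the example are hypothetical and chosen only to show the calculation; they are not taken from the article's Table 1 or from a real facility.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PowerBudget:
    """Hypothetical split of a site's fixed power capacity, in kW."""
    site_capacity_kw: float
    cooling_allocation_kw: float  # reserved for cooling at the design worst case
    other_overhead_kw: float      # assumed non-cooling overhead (lighting, losses, etc.)

    @property
    def compute_kw(self) -> float:
        """Power left for compute once cooling and other overhead are reserved."""
        return (self.site_capacity_kw
                - self.cooling_allocation_kw
                - self.other_overhead_kw)


def recovered_compute_kw(baseline: PowerBudget, redesigned: PowerBudget) -> float:
    """Extra compute power available at the same site capacity."""
    if baseline.site_capacity_kw != redesigned.site_capacity_kw:
        raise ValueError("compare budgets at the same site capacity")
    return redesigned.compute_kw - baseline.compute_kw


if __name__ == "__main__":
    # Illustrative numbers only.
    with_chiller = PowerBudget(10_000.0, 3_000.0, 500.0)
    compressorless = PowerBudget(10_000.0, 1_000.0, 500.0)
    print(recovered_compute_kw(with_chiller, compressorless))  # 2000.0
```

In this made-up case, shrinking the worst-case cooling reservation from 3,000 kW to 1,000 kW frees 2,000 kW for compute without changing the site's total capacity.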

Tracking Effectiveness: The Case for PCE

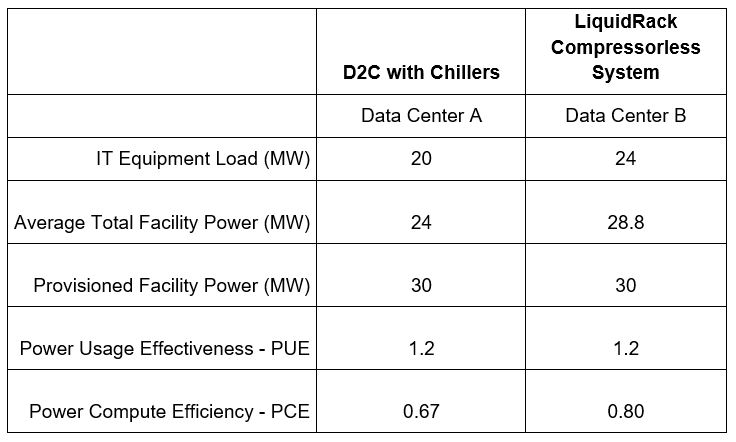

Traditionally, PUE and Water Usage Effectiveness (WUE) are used to track cooling effectiveness. However, as data center designs and needs evolve, so must the terminology. Power Compute Effectiveness (PCE) is an emerging performance metric for AI-era data centers. While PUE compares total facility power with IT equipment power, and so mainly reflects cooling and other facility overhead, PCE is the ratio of the power allocated for compute to the overall power capacity of the data center. The higher the score, the more compute is delivered without adding megawatts, infrastructure, or delay. PCE is powerful because it measures the share of power that generates revenue.

Think of it this way: If a data center is a delivery fleet, PUE measures how fuel-efficient the trucks are (the facility), but it doesn’t tell you if the trucks are actually carrying any cargo. By comparison, PCE measures how many packages are actually delivered per gallon of fuel (the compute output). By sticking with traditional air cooling, operators are essentially burning up to 60 percent of their fuel just to keep the empty trucks running cool, rather than delivering the “cargo” of AI processing.
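
The two ratios can be written down as a minimal sketch. PUE is the standard total-facility-to-IT ratio (shown here on power readings for simplicity, although it is normally reported on energy over a period), and PCE follows the description in this article. The function names and the two example sites are hypothetical.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power divided by IT power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def pce(compute_allocated_kw: float, site_capacity_kw: float) -> float:
    """Power Compute Effectiveness as described here:
    power allocated for compute divided by overall site power capacity."""
    if site_capacity_kw <= 0:
        raise ValueError("site capacity must be positive")
    return compute_allocated_kw / site_capacity_kw


if __name__ == "__main__":
    # Two hypothetical sites with identical measured PUE but different allocations.
    for name, allocated_kw in (("site A", 6_500.0), ("site B", 8_500.0)):
        print(name,
              f"PUE={pue(5_000.0, 4_000.0):.2f}",
              f"PCE={pce(allocated_kw, 10_000.0):.2f}")
```

Both hypothetical sites report the same PUE of 1.25, yet site B allocates 85% of its capacity to compute against 65% for site A, a difference that only the second ratio exposes.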

Example of Direct-to-Chip Data Center with Chiller compared to Compressorless System

Table 1 – Data center power comparison between D2C with Chiller and Compressorless System

Operators can still use PUE as an effective performance metric, but PUE should be measured in combination with PCE. Together, they provide a complete view of both efficiency and output, enabling operators to maximize compute density, accelerate time to capacity, and minimize capital expansion.

As the AI data center revolution continues, liquid cooling has gone from a niche technology to the industry standard for hyperscale workloads, AI training clusters, and sustainable data center builds. These efficient and effective compute environments are only made possible by liquid cooling. Measuring success using PCE alongside PUE represents a forward-thinking industry shift because it tracks not just how efficiently power is used, but how effectively it translates into revenue-generating compute capacity.